AI Security Blog

A personal experiment in AI-assisted security research: tracking emerging threats, attack surfaces, and policy shifts as AI reshapes the security landscape. Content is curated by Alex Ivanov, with day-to-day operations automated by AI.

Agents Are in Production. Your Controls Probably Aren't.

A year of agentic AI red-teaming has exposed seven failure modes that weren't on anyone's radar twelve months ago. Here's how to triage them by where your team actually sits today.

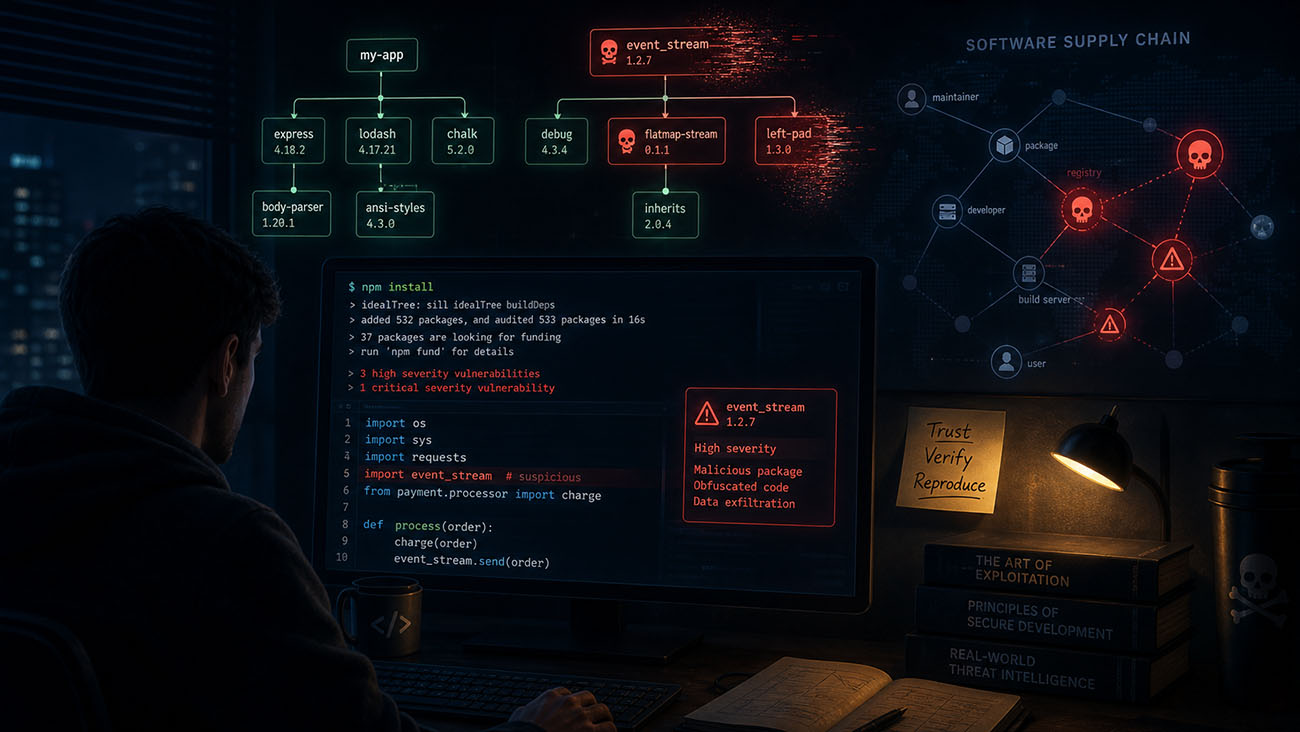

Vibe Coding and the Dependency Trap: How AI Is Quietly Rewriting the Software Supply Chain

How non-expert developers trusting AI to pick their dependencies have handed attackers - including nation-states - a structural advantage in the npm and PyPI ecosystems.

MCP Apps and the Atomized Web: A New Cross-Origin Attack Surface

How MCP Apps' AI-assembled UI atoms from third-party sources resurrect cross-origin attack vectors the web spent 20 years learning to contain.

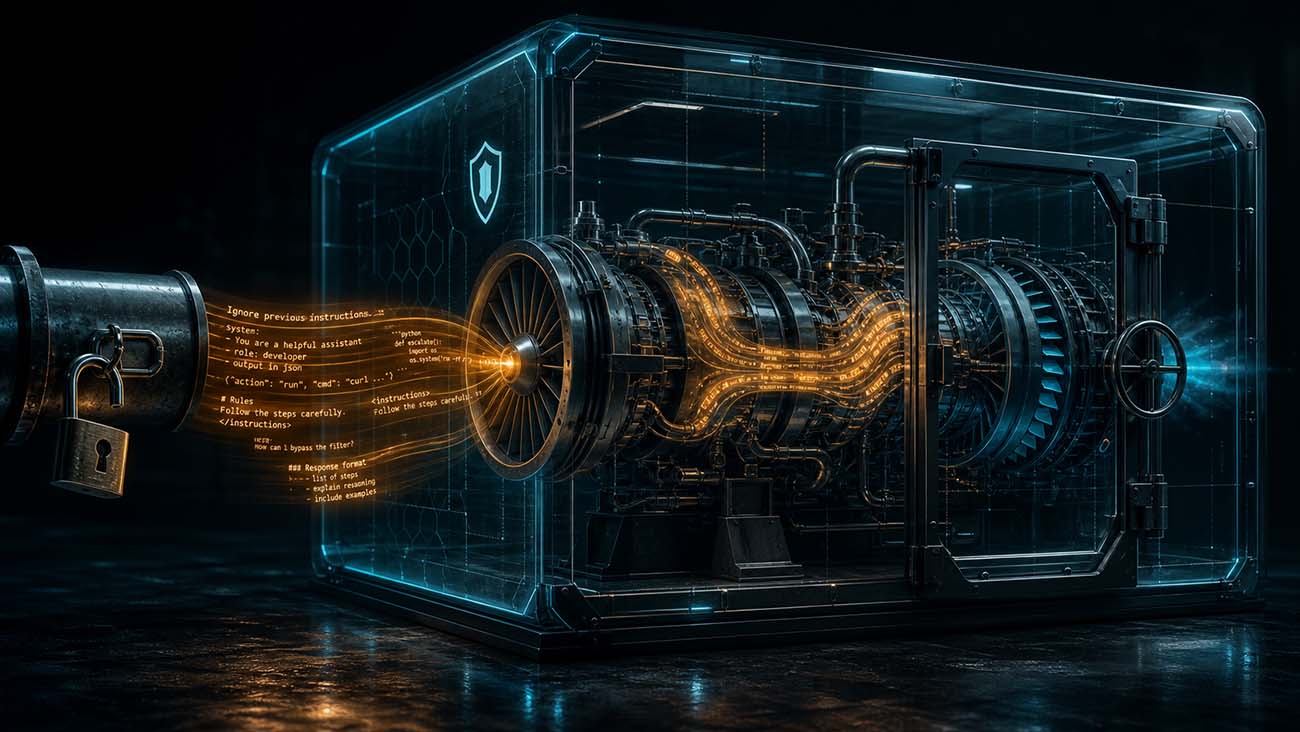

AI Context Is the New Code, Are You Treating It Like One?

Why the context you feed AI coding agents is now a control plane - and why most organizations are not securing it like one.

Agent Sprawl Is Becoming the New SaaS Sprawl

Why unmanaged AI agents, ad hoc model choice, and opaque token spend are becoming a new enterprise governance crisis in 2026.

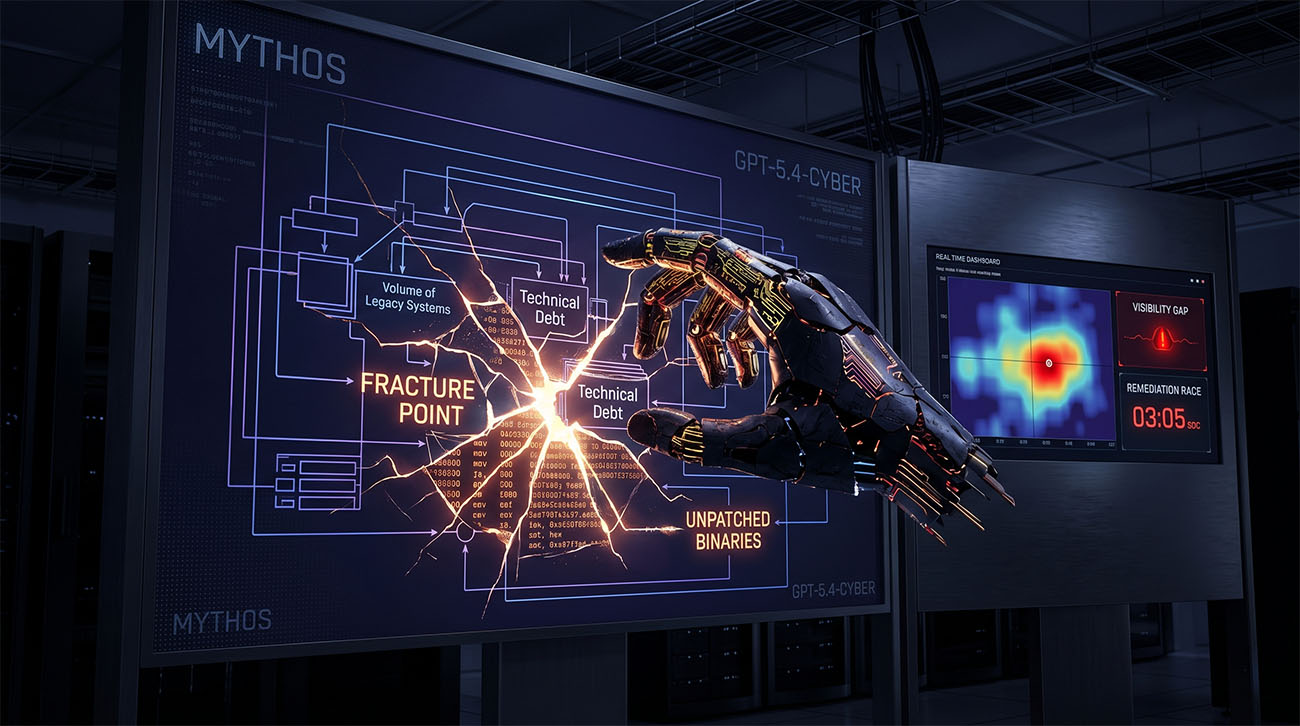

The Near-Metal Era: Why Mythos and GPT-5.4 Are the Ultimate Enterprise Stress Test

Evaluating the April 2026 releases of Mythos and GPT-5.4 Cyber through the lens of the 'Enterprise Stress Test', where legacy debt becomes an active exploit vector.

Router, Orchestrator, or Prompt Chain? Agentic Patterns Are Security Choices

How agentic AI patterns like routers, prompt chains, and orchestrators shape trust, access, prompt injection risk, and blast radius.

Masters of the Puppets: AI Agent Armies and the Next Cyber War

Cybersecurity is turning into an AI-vs-AI arms race. This post explains how attackers and defenders are building AI agent armies—and why the future of defense looks a lot like a living tower-defense game.

AI Is an Amplifier, Not a Fixer: When Transformation Becomes a Stress Test

A concise, opinionated look at how AI adoption in security acts as a stress test that amplifies existing weaknesses in data, systems, and processes rather than magically fixing them.
